Tag: Encryption

-

Bribe or ‘Tax’? NSA Gives $10 Million to RSA for Backdoor Access

Hmm. Hold up. So if we go by this Wikipedia entry: “Founded as an independent company in 1982, RSA Security, Inc. was acquired by EMC Corporation in 2006 for US$ 2.1 billion and operates as a division within EMC.[5]” People need to understand, this means RSA took around 2% of what they’d make in one…

-

How Online Privacy Tools Are Changing Internet Security

How online privacy tools are changing Internet security and driving the (probably quixotic) quest for anonymity in the digital age. For many of us, the Internet is like a puppy—lovable by design and fun to play with, but prone to biting. We suspect that our digital footprint is being tracked and recorded (true), mined and…

-

Cryptoparty Goes Viral: Pen testers, Privacy Geeks Spread Security to the Masses

Security professionals, geeks and hackers around the world are hosting a series of cryptography training sessions for the general public. The ‘cryptoparty’ sessions were born in Australia and kicked off last week in Sydney and Canberra along with two in the US and Germany. Information security experts and privacy advocates of all political stripes have…

-

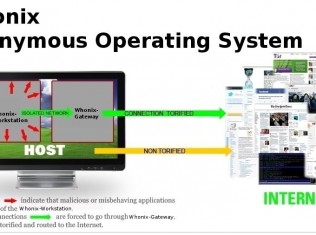

How to secure your computer and surf fully Anonymous BLACK-HAT STYLE

This is a guide with which even a total noob can get high-class security for his system and complete anonymity online. But it’s not only for noobs; it contains a lot of tips most people will find pretty helpful. It is explained in such detail that even the biggest noobs can do it^^ : === The…

-

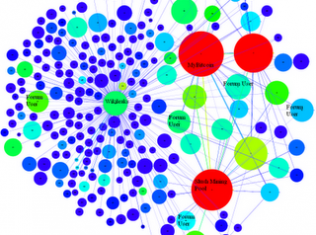

An Analysis of Anonymity in the Bitcoin System

Bitcoin is not inherently anonymous. It may be possible to conduct transactions in such a way as to obscure your identity, but, in many cases, users and their transactions can be identified. We have performed an analysis of anonymity in the Bitcoin system and published our results in a preprint on arXiv. The Full Story Anonymity…

-

Verifying Claims of Full-Disk Encryption in Hard Drive Firmware

Date: Wed, 9 Nov 2011 10:16:11 +0100 From: Eugen Leitl <eugen[at]leitl.org> To: cypherpunks[at]al-qaeda.net Subject: Re: [p2p-hackers] Verifying Claims of Full-Disk Encryption in Hard Drive Firmware ----- Forwarded message from Tom Ritter <tom[at]ritter.vg> ----- From: Tom Ritter <tom[at]ritter.vg> Date: Tue, 08 Nov 2011 19:51:53 -0500 To: p2p-hackers[at]lists.zooko.com Subject: Re: [p2p-hackers] Verifying Claims of Full-Disk Encryption in…